Trends Shaping the Future of Innovation in 2026

By Minal Hossan

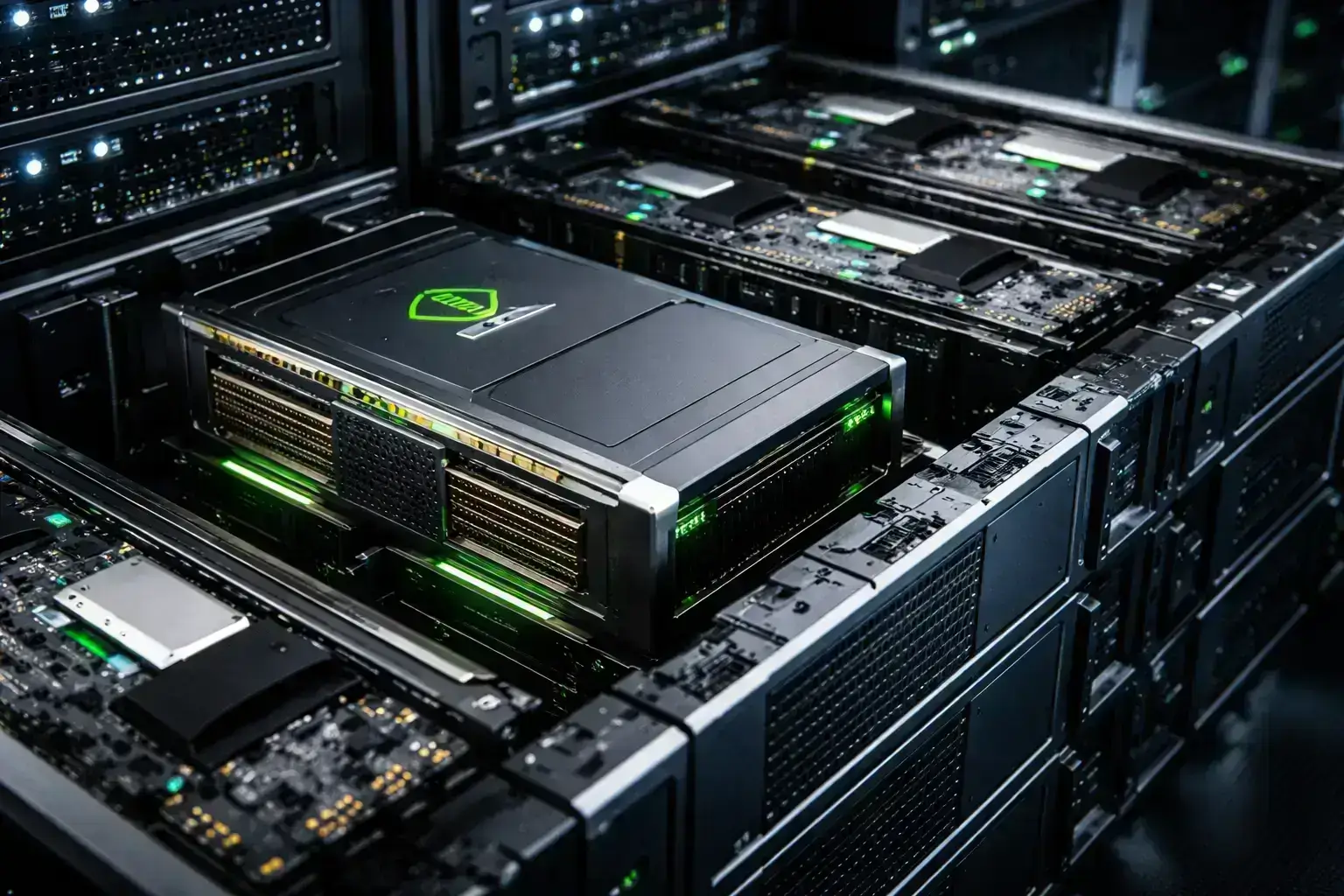

On February 22, 2026, Nvidia unveiled its latest AI GPU, marking a significant milestone in machine learning technology. This new GPU promises unparalleled performance, energy efficiency, and scalability, making it an essential tool for AI researchers, developers, and businesses. With AI workloads becoming increasingly complex, this GPU is designed to tackle the growing demands of modern AI applications, positioning Nvidia at the forefront of the industry.

Nvidia’s GPUs have been instrumental in advancing AI and machine learning over the past decade. Initially popular for gaming, the company’s hardware quickly became the backbone of AI research, powering some of the world’s most complex deep learning models. The A100 and H100 GPUs revolutionized AI training by cutting model training times and offering unprecedented computational power.

As AI models have grown more sophisticated, the demand for more powerful hardware has increased. The next-generation AI GPU builds on Nvidia’s legacy, improving key areas such as performance, energy consumption, and real-time inference capabilities. This release comes at a critical time when industries across the board are increasingly adopting AI to drive innovation and efficiency.

Nvidia’s next-gen AI GPU promises to push the boundaries of machine learning and AI workloads. Officially revealed on February 22, 2026, the new GPU is designed to accelerate deep learning, AI research, and real-time inference tasks. Unlike previous iterations, this GPU integrates several new technologies aimed at maximizing both performance and energy efficiency, which is crucial for handling increasingly large and complex datasets.

Key highlights of the announcement include a 40% performance boost and a 25% improvement in energy efficiency.

The new GPU will be available to developers starting in March 2026, with general availability expected by Q2 2026.

Here’s a detailed breakdown of the key technical features that set the new Nvidia GPU apart:

Nvidia’s new GPU is set to make waves across multiple sectors. The improvements in processing power and energy efficiency will have significant implications for industries such as:

Competitor Reactions:

While Nvidia remains the leader in the AI GPU market, competitors like AMD and Intel are ramping up their efforts to challenge Nvidia’s dominance. However, with Nvidia’s seamless integration of both hardware and software, it remains difficult for competitors to match the full ecosystem Nvidia provides.

From a technical perspective, this GPU is a remarkable achievement for Nvidia. Dr. Ethan Davis, an AI researcher at MIT, explains: “The improvements in processing power and efficiency are crucial for scaling AI applications, particularly as models become more complex. The ability to run multiple AI models simultaneously with such efficiency sets this GPU apart from others in the market.”

While this new GPU will undoubtedly accelerate AI innovation, the continued reliance on specialized hardware could raise concerns about accessibility for smaller enterprises and researchers with limited budgets. However, Nvidia’s emphasis on energy efficiency could help alleviate some of these concerns, especially in large-scale deployments where energy costs are a major factor.

For developers, the next-gen AI GPU offers a significant performance upgrade, enabling faster training and inference for machine learning models. Developers will be able to handle more complex models and larger datasets, driving innovation in AI applications across industries. Additionally, the improved energy efficiency will help reduce operational costs, making it a more attractive option for businesses and research institutions focused on sustainability.

For consumers, the indirect impact will be seen through faster, more reliable AI-driven products, such as virtual assistants, autonomous vehicles, and healthcare devices. As these technologies become more advanced, consumers will benefit from smarter, more personalized experiences.

While the new GPU offers numerous advantages, there are potential drawbacks to consider, chiefly its high cost and the need for specialized infrastructure.

Looking ahead, Nvidia will likely continue refining its AI hardware to keep pace with rapid advances in machine learning and artificial intelligence. Future models are expected to build on this GPU, with even more emphasis on scalability, energy efficiency, and AI-specific optimizations.

Nvidia is also expected to integrate its GPUs more deeply into emerging technologies, such as quantum computing and 5G-powered AI applications. As AI adoption continues to grow across industries, Nvidia’s hardware will play a pivotal role in shaping the future of AI technology.

The main risks include its high cost and the need for specialized infrastructure, which could limit access for smaller companies or developers.

The GPU will be available to developers in March 2026, with full availability expected by Q2 2026.

The new AI GPU offers a 40% performance boost and is 25% more energy-efficient, allowing it to handle more complex models and larger datasets faster.
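To put those two percentages in concrete terms, here is a rough back-of-the-envelope sketch. It assumes the 40% figure means 1.4x throughput and the 25% figure means 1.25x work per unit of energy. The article does not say how either number was measured or against which baseline, so both readings are assumptions. The function name and the baseline job (100 hours, 5,000 kWh) are hypothetical and chosen only for illustration.

```python
def projected_job(baseline_hours, baseline_kwh, perf_gain=0.40, efficiency_gain=0.25):
    """Estimate run time and energy for the same job on the newer GPU.

    Assumptions (not stated in the article):
      - perf_gain is a throughput increase, so time scales by 1 / (1 + perf_gain)
      - efficiency_gain is extra work per unit of energy, so energy scales
        by 1 / (1 + efficiency_gain)
    """
    hours = baseline_hours / (1 + perf_gain)
    kwh = baseline_kwh / (1 + efficiency_gain)
    return hours, kwh


# Hypothetical baseline: a training run that takes 100 hours and 5,000 kWh.
hours, kwh = projected_job(100.0, 5000.0)
print(f"Projected time:   {hours:.1f} hours")
print(f"Projected energy: {kwh:.0f} kWh")
```

Under these assumptions, a 40% performance boost shortens the job by roughly 29%, not 40%, and a 25% efficiency gain cuts energy use by 20%. Real workloads may scale differently depending on memory, interconnect, and software bottlenecks.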